Menu

- Products

- Content Platforms

- Content Services

- ABBYY Timeline

- ABBYY Vantage Connector for M-Files

- AutoRecords

- Elasticsearch

- Frevvo eForms & Workflow

- Dropbox Sign Integrations

- Intercode Intelligent Data Platform

- MENT: Advanced Document Generation

- M-Connect Construction Management

- M-Connect Digital Workplace

- Microsoft Modern Workplace

- M-Connect Field Services

- M-Connect Inspection Management

- Migration Accelerator

- Modern UI

- Redaction Service and AutoRedaction

- Smartlogic Semaphore

- Syl Search

- See All Products

- Solutions

- Accounts Payable Automation

- Business Process Automation

- Business Process Optimization

- Cloud Migration

- Content Advisory

- Content Migration

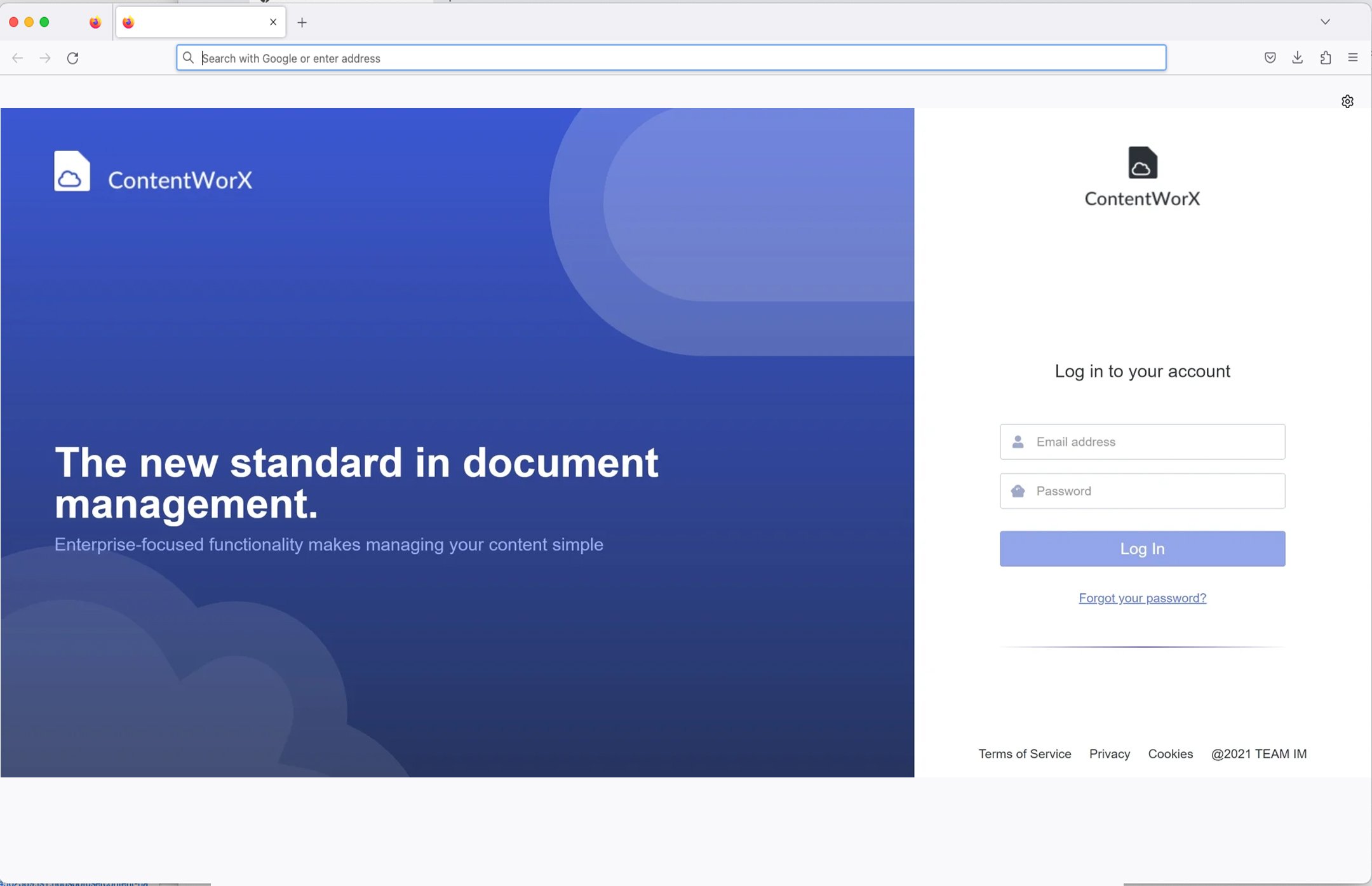

- ContentWorX

- Field Services / MobileApp

- Implementation Services

- Public Sector

- Security Solutions

- Support and Managed Services

- M-Connect Accounting Services

- File Analytics / Inventory

- See All Solutions

- Resources

- About TEAM IM

- Contact

- Visit: